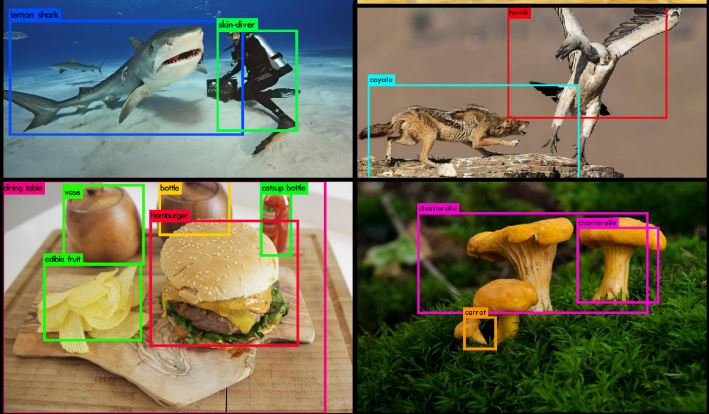

YOLO (You Solely Look As soon as) is a household of object detection fashions common for his or her real-time processing capabilities, delivering excessive accuracy and pace on cellular and edge units. Launched in 2020, YOLOv4 enhances the efficiency of its predecessor, YOLOv3, by bridging the hole between accuracy and pace.

Whereas lots of the most correct object detection fashions require a number of GPUs operating in parallel, YOLOv4 could be operated on a single GPU with 8GB of VRAM, such because the GTX 1080 Ti, which makes widespread use of the mannequin attainable.

On this weblog, we are going to look deeper into the structure of YOLOv4 what adjustments have been made that made it attainable to be run in a single GPU, and at last take a look at a few of its real-life purposes.

The YOLO Household of Fashions

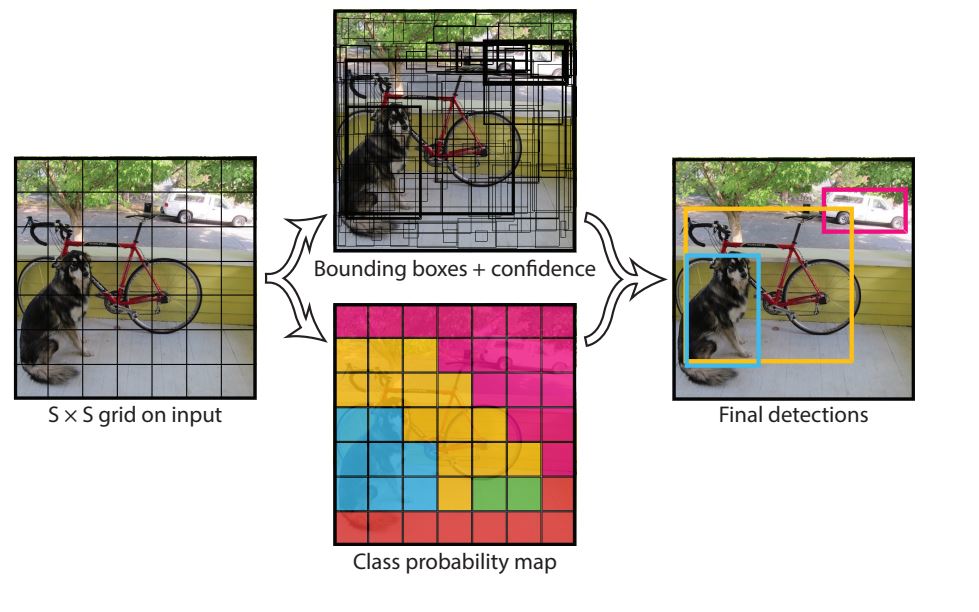

The primary YOLO mannequin was launched again in 2016 by a group of researchers, marking a big development in object detection expertise. In contrast to the two-stage fashions common on the time, which have been sluggish and resource-intensive, YOLO launched a one-stage strategy to object detection.

YOLOv1

The structure of YOLOv1 was impressed by GoogLeNet and divided the enter dimension picture right into a 7×7 grid. Every grid cell predicted bounding packing containers and confidence scores for a number of objects in a single cross. With this, it was capable of run at over 45 frames per second and made real-time purposes attainable. Nonetheless, accuracy was poorer in comparison with two-stage fashions corresponding to Sooner RCNN.

YOLOv2

The YOLOv2 object detector mannequin was Launched in 2016 and improved upon its predecessor with higher accuracy whereas sustaining the identical pace. Probably the most notable change was the introduction of predefined anchor packing containers into the mannequin for higher bounding field predictions. This alteration considerably improved the mannequin’s imply common precision (mAP), significantly for smaller objects.

YOLOv3

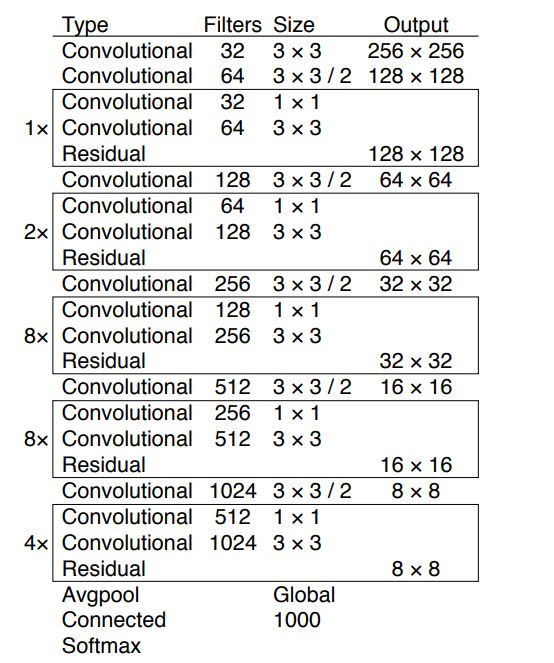

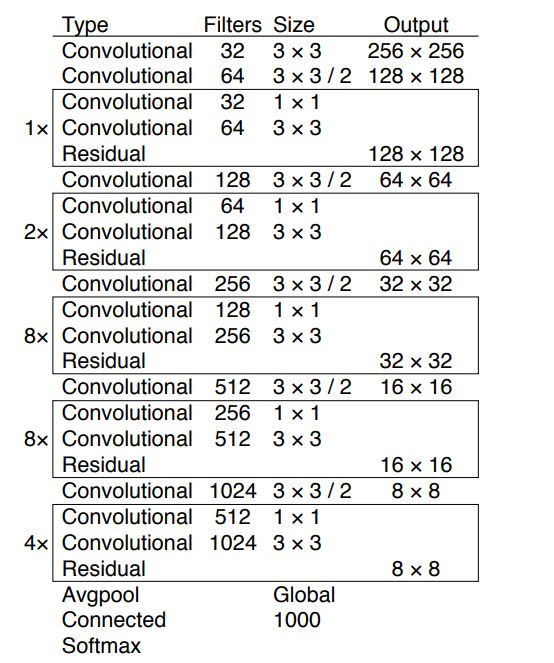

YOLOv3 was launched in 2018 and launched a deeper spine community, the Darknet-53, which had 53 convolutional layers. This deeper community helped with higher characteristic extraction. Moreover, it launched Objectness scores for bounding packing containers (predicting whether or not the bounding field incorporates an object or background). Moreover, the mannequin launched Spatial Pyramid Pooling (SPP), which elevated the receptive discipline of the mannequin.

Key Innovation launched in YOLOv4

YOLOv4 improved the effectivity of its predecessor and made it attainable for it to be skilled and run on a single GPU. General the structure adjustments made all through the YOLOv4 are as follows:

- CSPDarknet53 as spine: Changed the Darknet-53 spine utilized in YOLOv3 with CSP Darknet53

- PANet: YOLOv4 changed the Characteristic Pyramid Community (FPN) utilized in YOLOv3 with PANet

- Self-Adversarial Coaching (SAT)

Moreover, the authors did in depth analysis on discovering one of the simplest ways to coach and run the mannequin. They categorized these experiments as Bag of Freebies (BoF) and Bag of Specials (BoS).

Bag of Freebies are adjustments that happen throughout the coaching course of solely and assist enhance the mannequin efficiency, subsequently it solely will increase the coaching time whereas leaving the inference time the identical. Whereas, Bag of Specials introduces adjustments that barely enhance inference computation necessities however provide larger accuracy acquire. With this, a person may choose what freebies they should use whereas additionally being conscious of its prices by way of coaching time and inference pace towards accuracy.

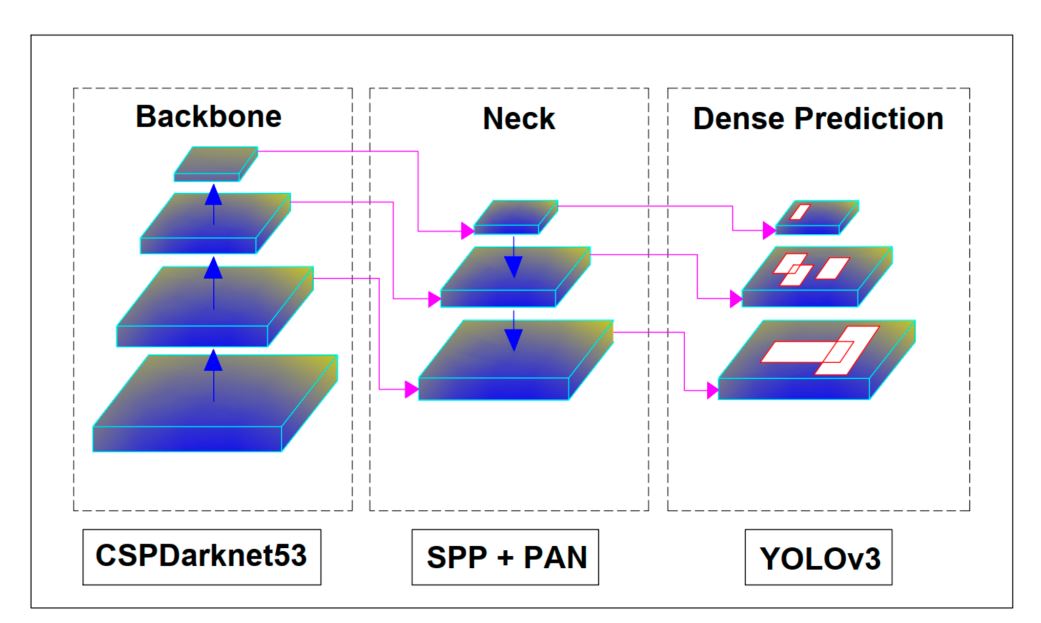

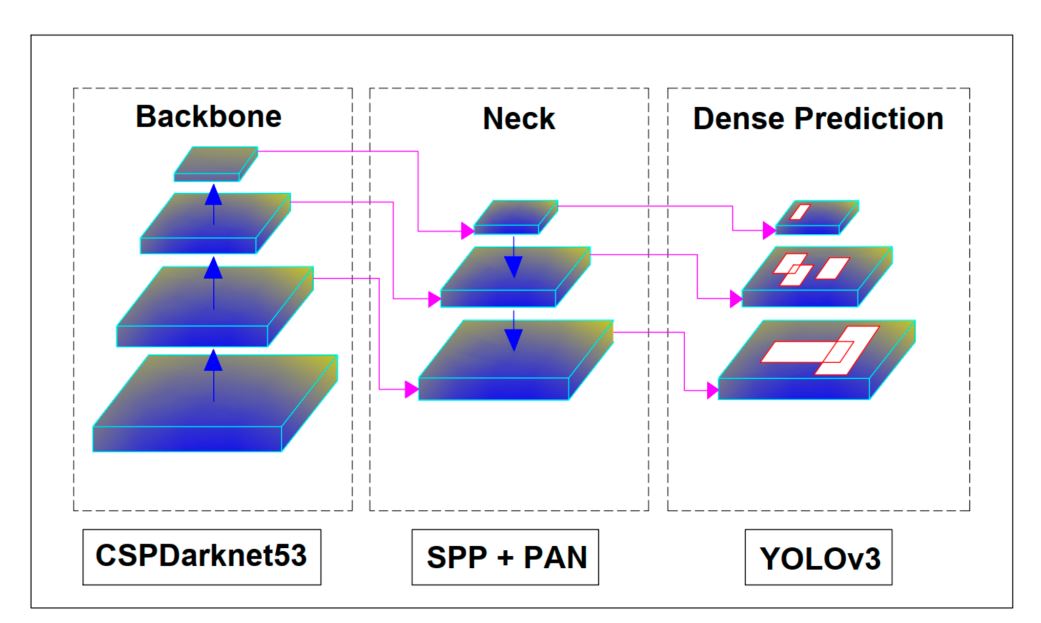

Structure of YOLOv4

Though the structure of YOLOv4 appears advanced at first, total the mannequin has the next most important elements:

- Spine: CSPDarkNet53

- Neck: SSP + PANet

- Head: YOLOv3

Other than these, all the pieces is left as much as the person to determine what they should use with Bag of Freebies and Bag of Specials.

CSPDarkNet53 Spine

A Spine is a time period used within the YOLO household of fashions. In YOLO fashions, the only objective is to extract options from photos and cross them ahead to the mannequin for object detection and classification. The spine is a CNN structure made up of a number of layers. In YOLOv4, the researchers additionally provide a number of selections for spine networks, corresponding to ResNeXt50, EfficientNet-B3, and Darknet-53 which has 53 convolution layers.

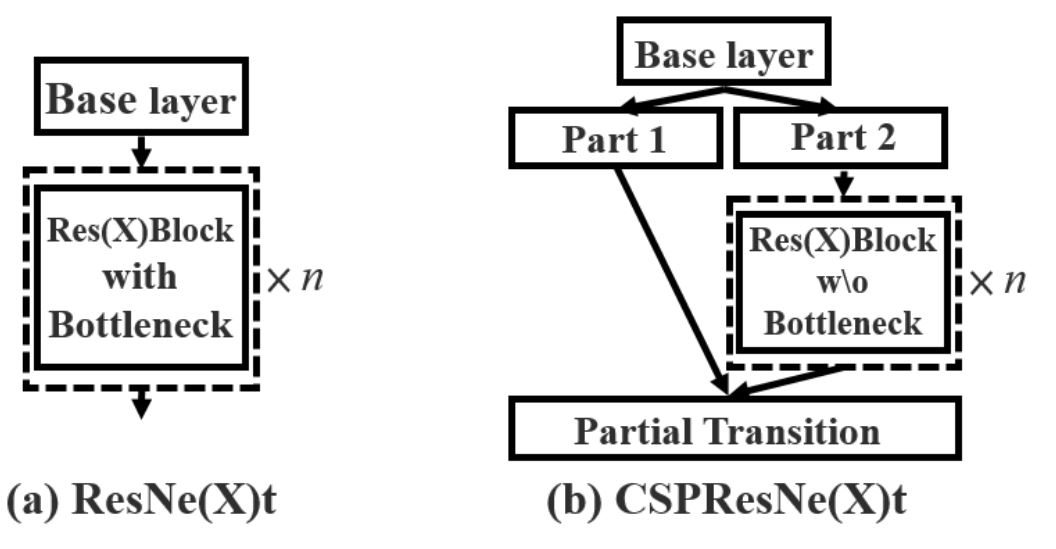

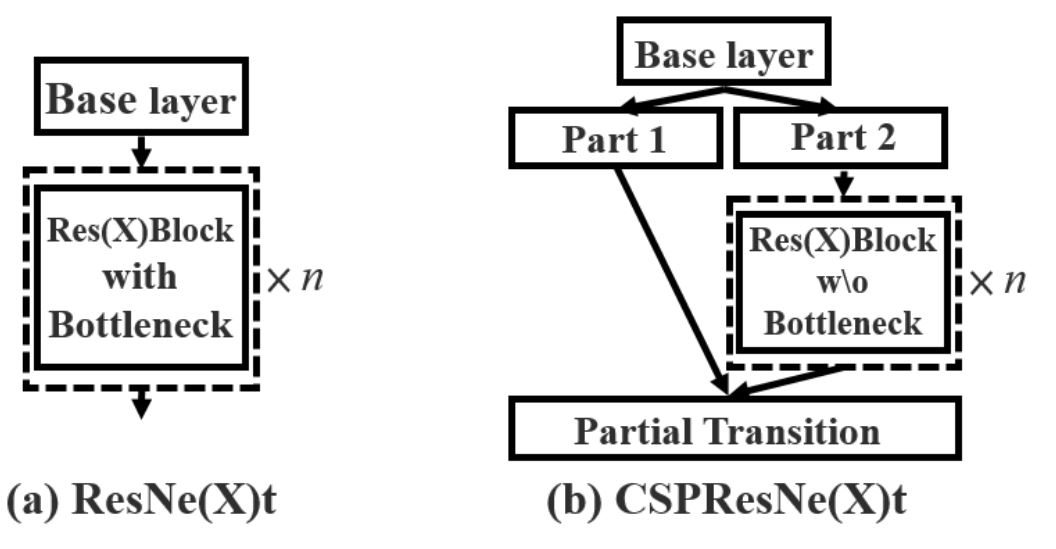

YOLOv4 makes use of a modified model of the unique Darknet-53, referred to as CSPNetDarkNet53, and is a crucial part of YOLOv4. It builds upon the Darknet-53 structure and introduces a Cross-Stage Partial (CSP) technique to boost efficiency in object detection duties.

The CSP technique divides the characteristic maps into two elements. One half flows via a sequence of residual blocks whereas the opposite bypasses them, and concatenates them later within the community. Though Darknet (impressed by ResNet makes use of the same design within the type of residual connections) the distinction lies as well as and concatenation. Residual connections add characteristic maps, whereas CSP concatenates them.

Concatenation characteristic maps facet by facet alongside the channel dimension enhance the variety of channels. For instance, concatenating two characteristic maps every with 32 channels ends in a brand new characteristic map with 64 channels. As a result of this nature, options are preserved higher and improves the thing detection mannequin accuracy.

Additionally, the CSP technique makes use of much less RAM, as half of the characteristic maps undergo the community. As a result of this, CSP methods have been proven to scale back computation wants by 20%.

SSP and PANet Neck

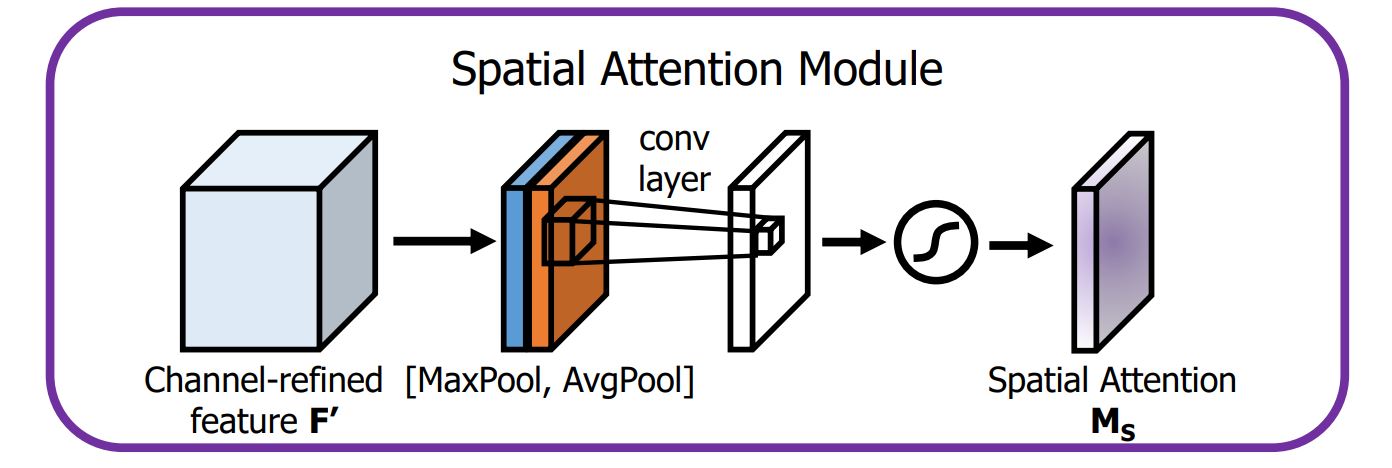

The neck in YOLO fashions collects characteristic maps from completely different phases of the spine and passes them all the way down to the pinnacle. The YOLOv4 mannequin makes use of a customized neck that consists of a modified model of PANet, spatial pyramid pooling (SPP), and spatial consideration module (SAM).

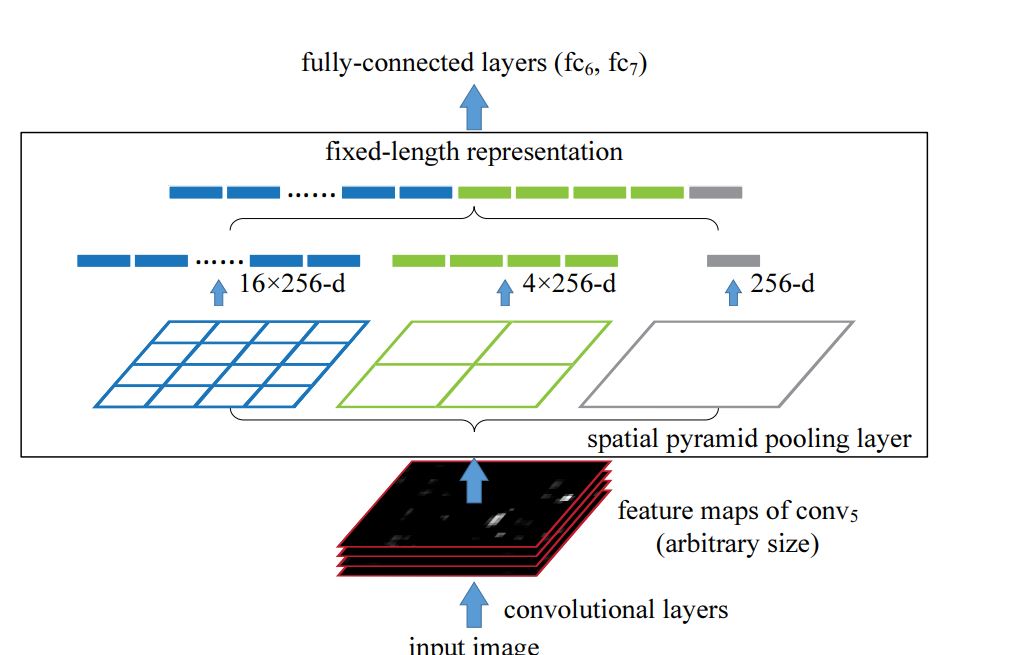

SPP

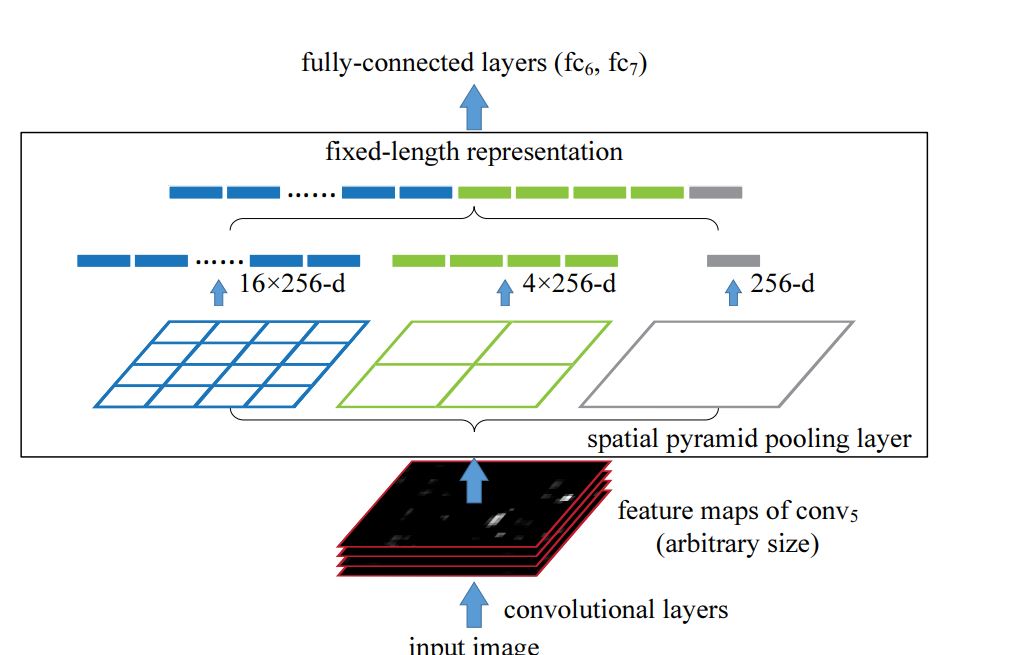

Within the conventional Spatial Pyramid Pooling (SPP), fixed-size max pooling is utilized to divide the characteristic map into areas of various sizes (e.g., 1×1, 2×2, 4×4), and every area is pooled independently. The ensuing pooled outputs are then flattened and mixed right into a single characteristic vector to supply a fixed-length characteristic vector that doesn’t retain spatial dimensions. This strategy is right for classification duties, however not for object detection, the place the receptive discipline is vital.

In YOLOv4 that is modified and makes use of fixed-size pooling kernels with completely different sizes (e.g., 1×1, 5×5, 9×9, and 13×13) however retains the identical spatial dimensions of the characteristic map.

Every pooling operation produces a separate output, which is then concatenated alongside the channel dimension moderately than being flattened. By utilizing massive pooling kernels (like 13×13) in YOLOv4, the SPP block expands the receptive discipline whereas preserving spatial particulars, permitting the mannequin to higher detect objects of assorted sizes (massive and small objects). Moreover, this strategy provides minimal computational overhead, supporting YOLOv4 for real-time detection.

PAN

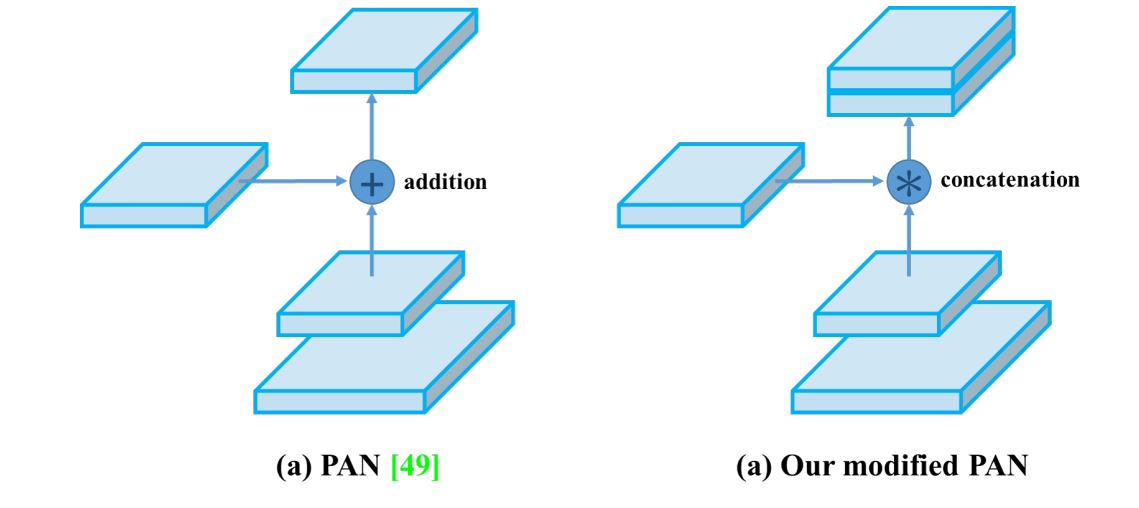

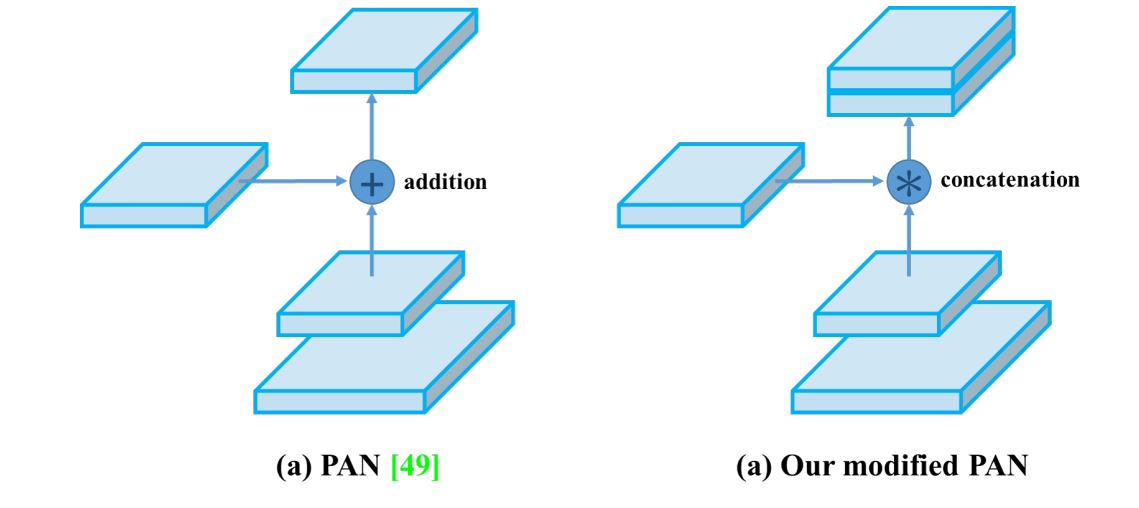

YOLOv3 made use of FPN, nevertheless it was changed with a modified PAN in YOLOv4. PAN builds on the FPN construction by including a bottom-up pathway along with the top-down pathway. This bottom-up path aggregates and passes options from decrease ranges again up via the community, which reinforces lower-level options with contextual info and enriches high-level options with spatial particulars.

Nonetheless, in YOLOv4, the unique PANet was modified and used concatenation as a substitute of aggregation. This permits it to make use of multi-scale options effectively.

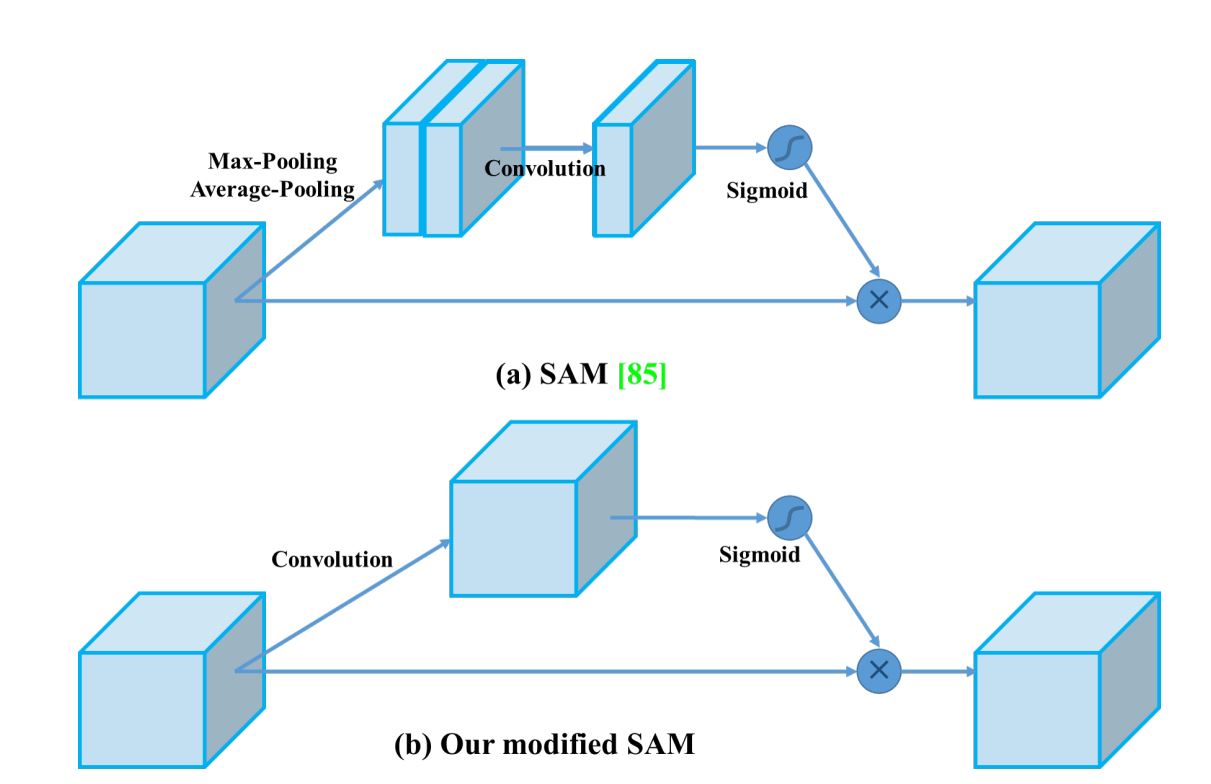

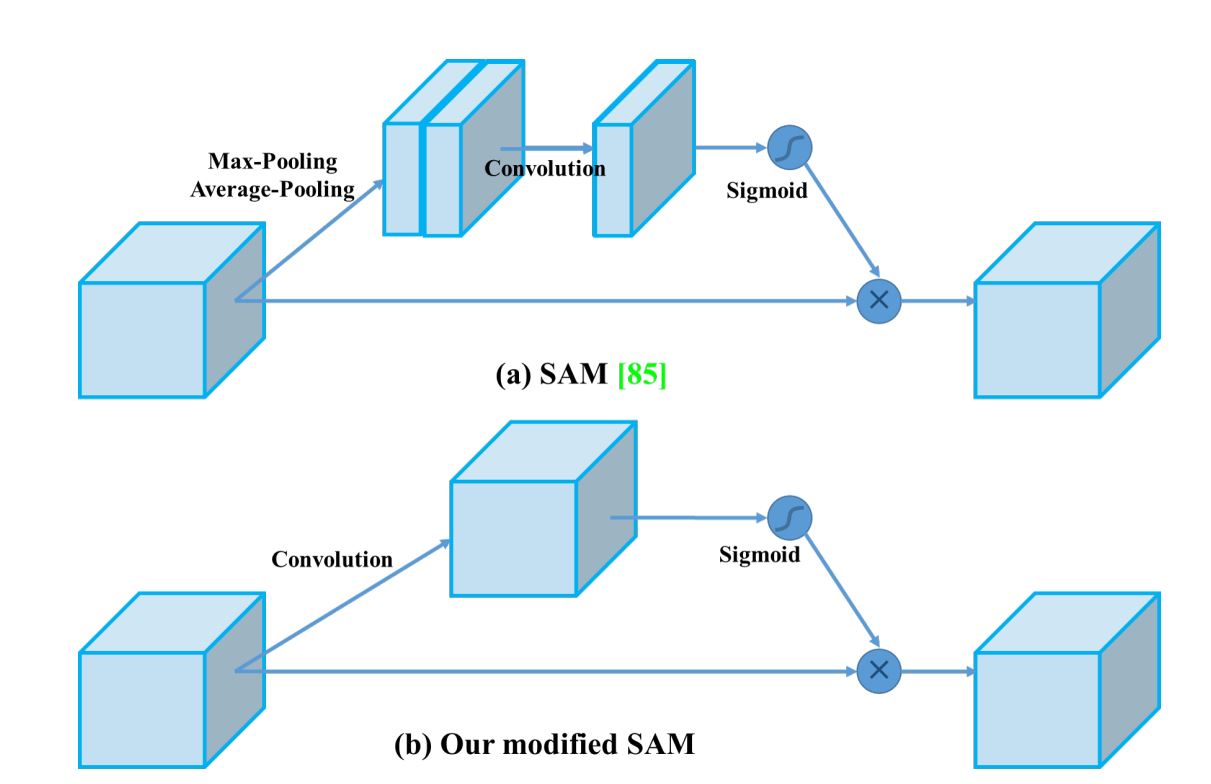

Modified SAM

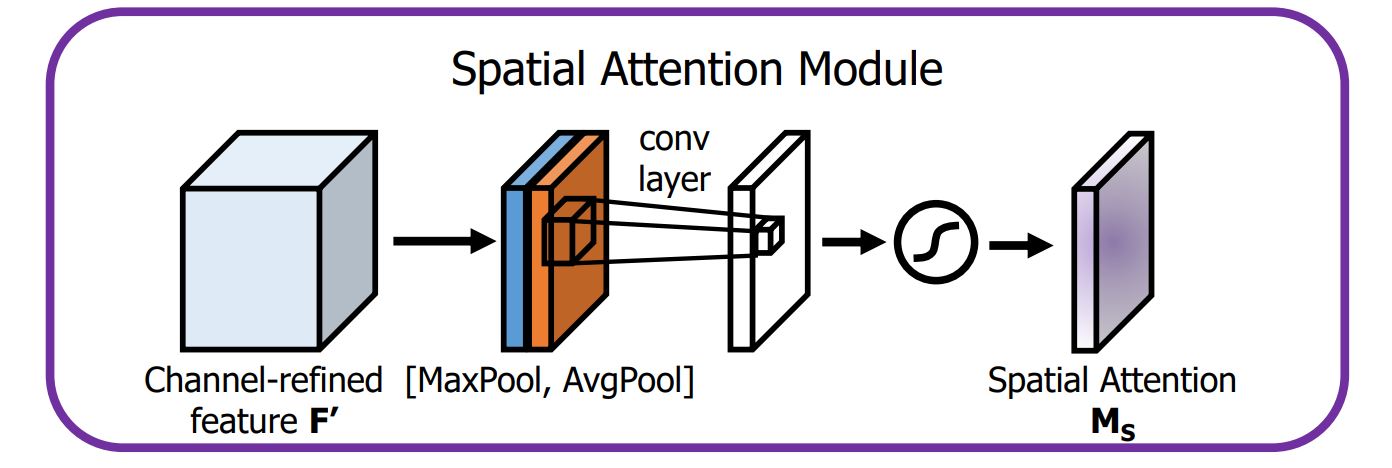

The usual SAM method makes use of each most and common pooling operations to create separate characteristic maps that assist deal with important areas of the enter.

Nonetheless, in YOLOv4, these pooling operations are omitted (as a result of they cut back info contained in characteristic maps). As an alternative, the modified SAM straight processes the enter characteristic maps by making use of convolutional layers adopted by a sigmoid activation perform to generate consideration maps.

How does a Customary SAM work?

The Spatial Consideration Module (SAM) is essential for its position in permitting the mannequin to deal with options which are essential for detection and miserable the irrelevant options.

- Pooling Operation: SAM begins by processing the enter characteristic maps in YOLO layers via two kinds of pooling operations—common pooling and max pooling.

- Common Pooling produces a characteristic map that represents the typical activation throughout channels.

- Max Pooling captures essentially the most important activation, emphasizing the strongest options.

- Concatenation: The outputs from common and max pooling are concatenated to kind a mixed characteristic descriptor. This step outputs each world and native info from the characteristic maps.

- Convolution Layer: The concatenated characteristic descriptor is then handed via a Convolution Neural Community. The convolutional operation helps to study spatial relationships and additional refines the eye map.

- Sigmoid Activation: A sigmoid activation perform is utilized to the output of the convolution layer, leading to a spatial consideration map. Lastly, this consideration map is multiplied element-wise with the unique enter characteristic map.

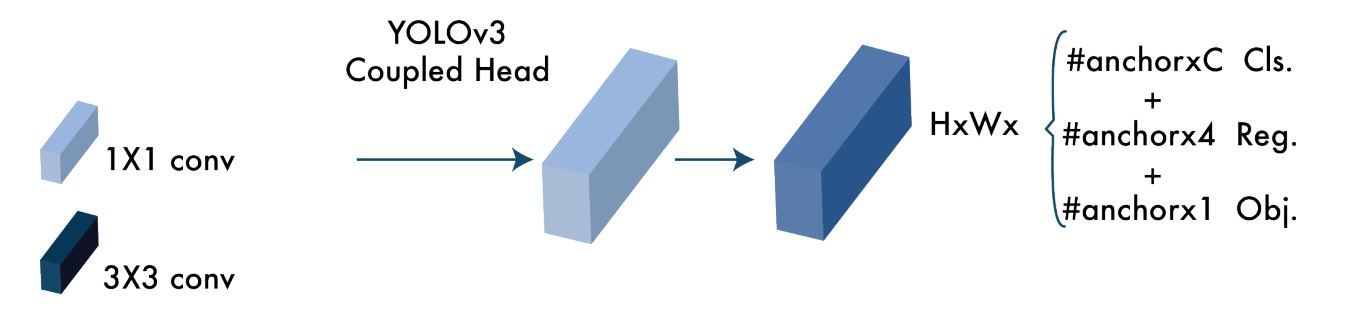

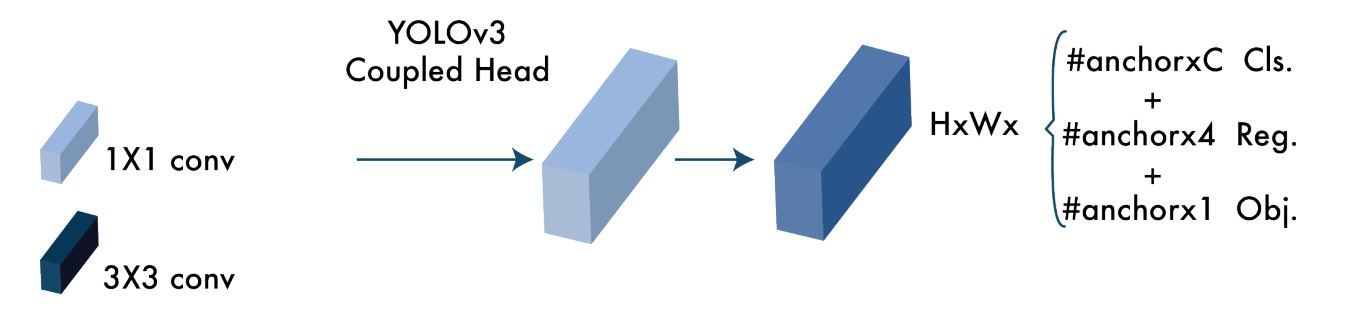

YOLOv3 Head

The top of YOLO fashions is the place object detection and classification occurs. Regardless of YOLOv4 being the successor it retains the pinnacle of YOLOv3, which suggests it additionally produces anchor field predictions and bounding field regression.

Subsequently, we are able to see that the optimizations carried out within the spine and neck of YOLOv4 are the explanation we see a noticeable enchancment in effectivity and pace.

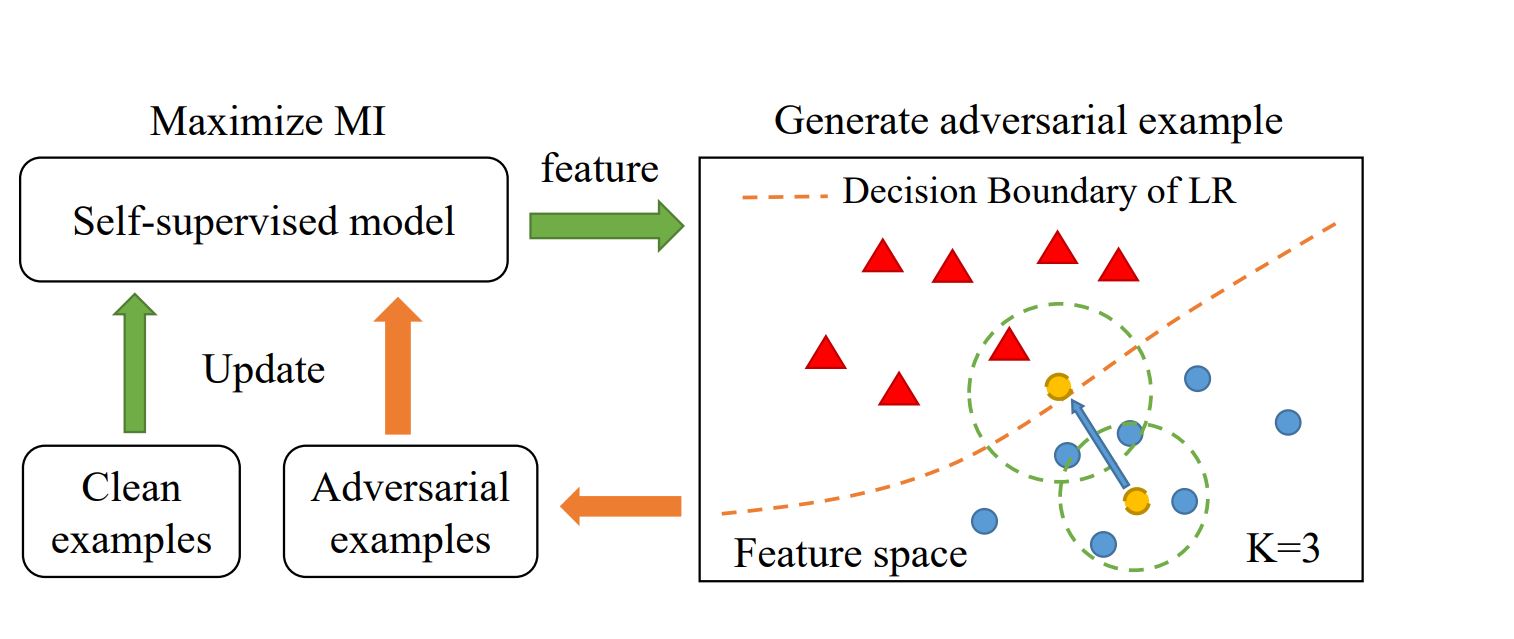

What’s Self-Adversarial Coaching (SAT)

Self Adversarial Coaching (SAT) in YOLOv4 is an information augmentation method utilized in coaching photos (prepare knowledge) to boost the mannequin’s robustness and enhance generalization.

How SAT Works

The essential concept of adversarial coaching is to enhance the mannequin’s resilience by exposing it to adversarial examples throughout coaching. These examples are created to mislead the mannequin into making incorrect predictions.

In step one, the community learns to change the unique picture to make it seem as if the specified object will not be current. Within the second step, the modified photos are used for coaching, the place the community makes an attempt to detect objects in these altered photos towards floor reality. This method intelligently alters photos in such a means that pushes the mannequin to study higher and generalize itself for photos not included within the coaching set.

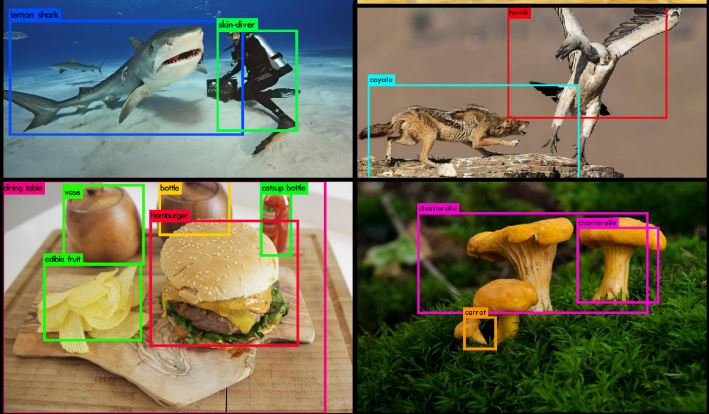

Actual-Life Utility of Utility of YOLOv4

YOLOv4 has been utilized in a variety of purposes and situations, together with use in embedded techniques. A few of these are:

- Harvesting Oil Palm: A bunch of researchers used YOLOv4 paired with a digital camera and laptop computer system with an Intel Core i7-8750H processor and GeForce DTX 1070 graphic card, to detect ripe fruit branches. In the course of the testing part, they achieved 87.9 % imply Common Precision (mAP) and 82 % recall charge whereas operating at a real-time pace of 21 FPS.

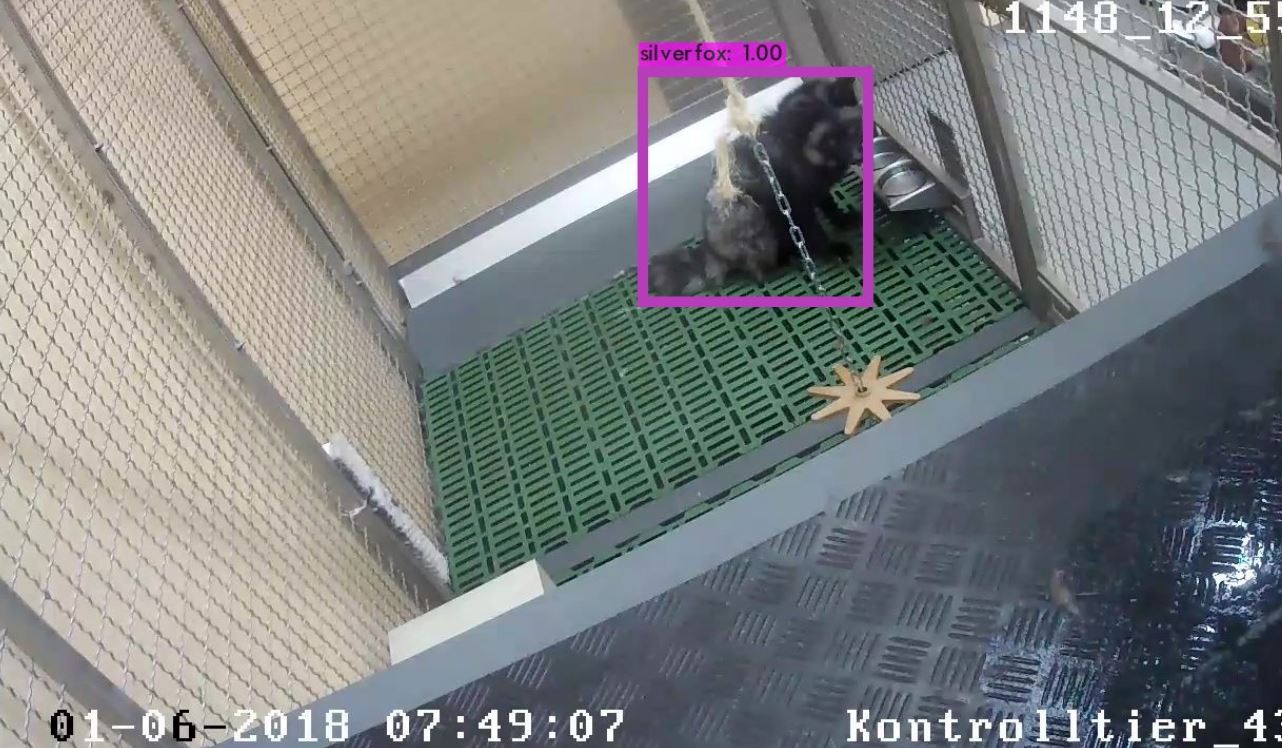

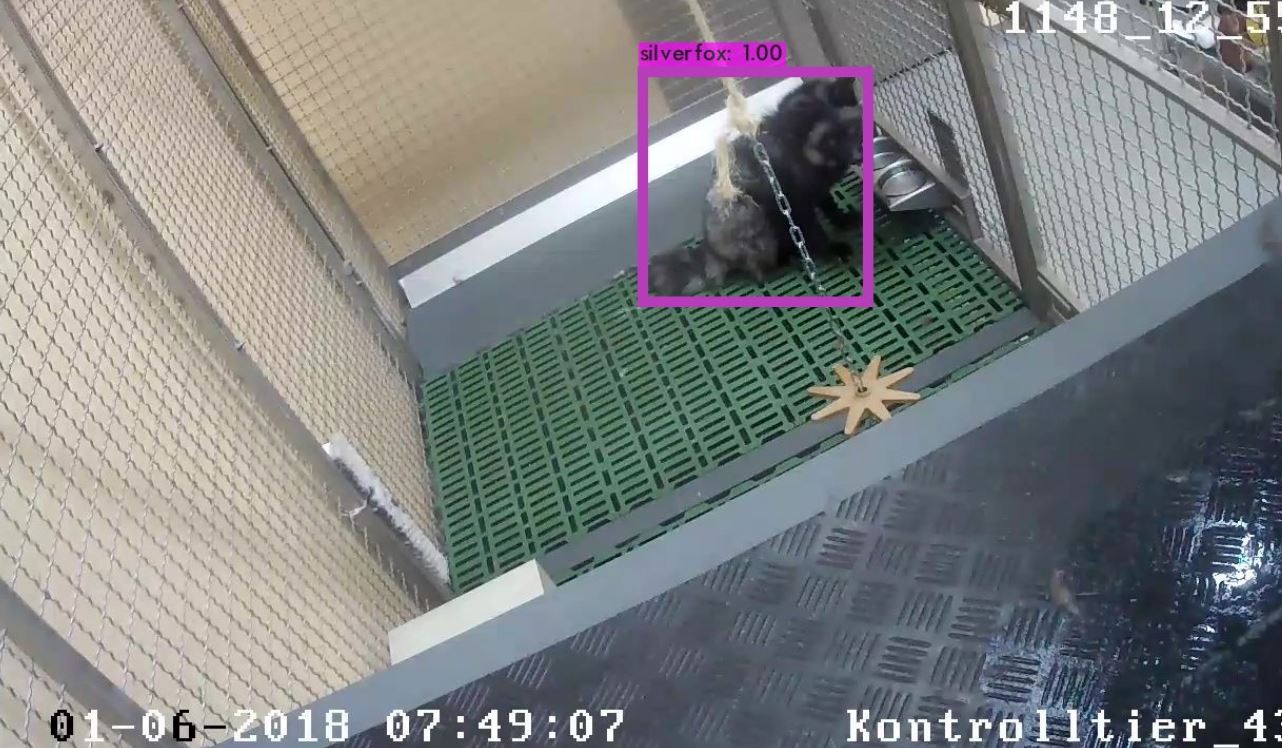

Ripe palm tree detection utilizing YOLOv4 –supply - Animal Monitoring: On this research, the researchers used YOLOv4 to detect foxes and monitor their motion and exercise. Utilizing CV, the researchers have been capable of robotically analyze the movies and monitor animal exercise with out human interference.

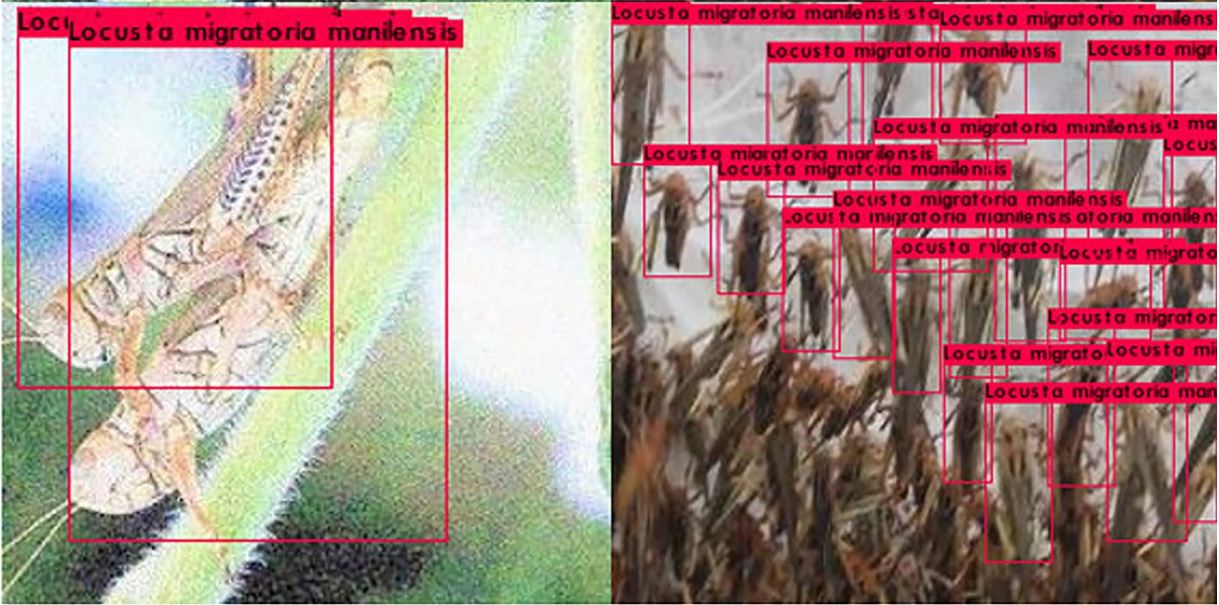

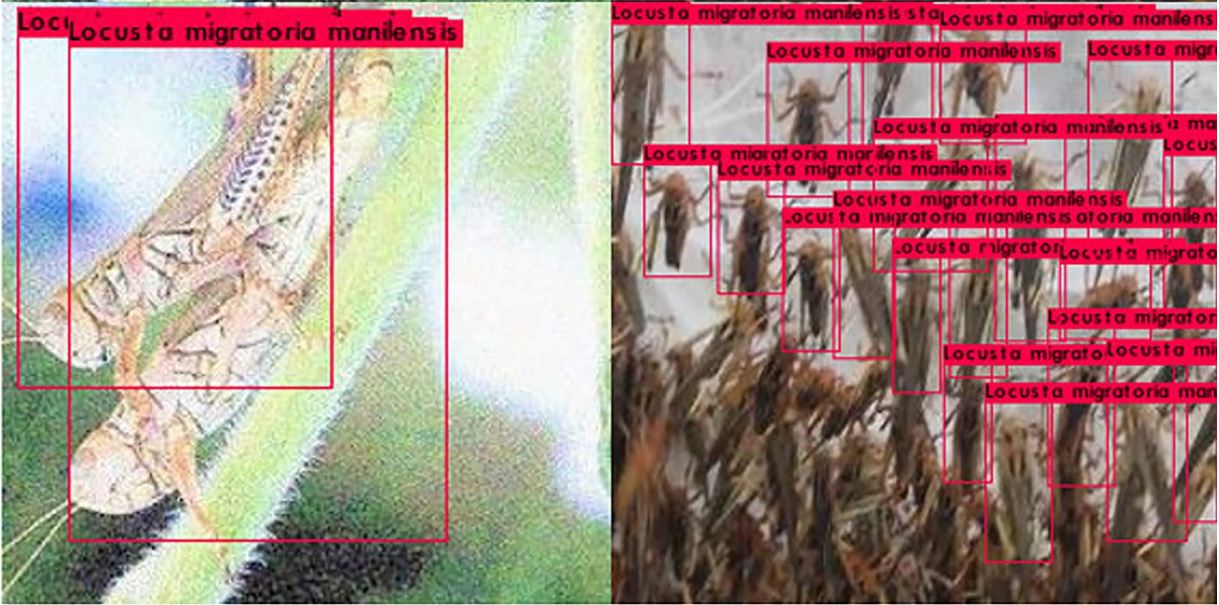

Silver fox detection utilizing YOLOv4 –supply - Pest Management: Correct and environment friendly real-time detection of orchard pests is essential to enhance the financial advantages of the fruit trade. Consequently, researchers skilled the YOLOv4 mannequin for the clever identification of agricultural pests. The mAP obtained was at 92.86%, and a detection time of 12.22ms, which is right for real-time detection.

Pest detection –supply - Poth Gap Detection: Pothole restore is a crucial problem and process in street upkeep, as handbook operation is labor-intensive and time-consuming. Consequently, researchers skilled YOLOv4 and YOLOv4-tiny to automate the inspection course of and acquired an mAP of 77.7%, 78.7%

Pothole detection utilizing YOLOv4 –supply - Prepare detection: Detection of a fast-moving prepare in real-time is essential for the protection of the prepare and folks round prepare tracks. A bunch of researchers constructed a customized object detection mannequin based mostly on YOLOv4 for fast-moving trains, and achieved an accuracy of 95.74%, with 42.04 frames per second, which suggests detecting an image solely takes 0.024s.

What’s Subsequent

On this weblog, we regarded into the structure of the YOLO v4 mannequin, and the way it permits coaching and operating object detection fashions utilizing a single GPU. Moreover, we checked out extra options the researchers launched termed as Bag of Freebies and Bag of Specials. General, the mannequin introduces three key options. Using the CSPDarkNet53 as spine, modified SSP, and PANet. Lastly, we additionally checked out how researchers have integrated the mode for numerous CV purposes.