In 2024, the Royal Swedish Academy of Sciences awarded the Nobel Prize in Physics to John J. Hopfield and Geoffrey E. Hinton, who are considered pioneers for their work in artificial intelligence (AI). Physics is a fascinating field, and it has always been intertwined with groundbreaking discoveries that change our understanding of the universe and advance our technology. John Hopfield is a physicist whose work has contributed to machine learning and AI, while Geoffrey Hinton, often called the godfather of AI, is the computer scientist whose research underpins many of today’s advances in AI.

Both John Hopfield and Geoffrey Hinton conducted foundational research on artificial neural networks (ANNs). The Nobel Prize recognizes research that enabled machine learning with ANNs, allowing machines to learn in ways previously thought to be exclusive to humans. In this overview, we will delve into Hopfield’s and Hinton’s groundbreaking research and explore the key concepts that have shaped modern AI and earned them the Nobel Prize.

About us: Viso Suite is end-to-end computer vision infrastructure for enterprises. In a unified interface, companies can streamline the production, deployment, and scaling of intelligent, vision-based applications. To start implementing computer vision for business solutions, book a demo of Viso Suite with our team of experts.

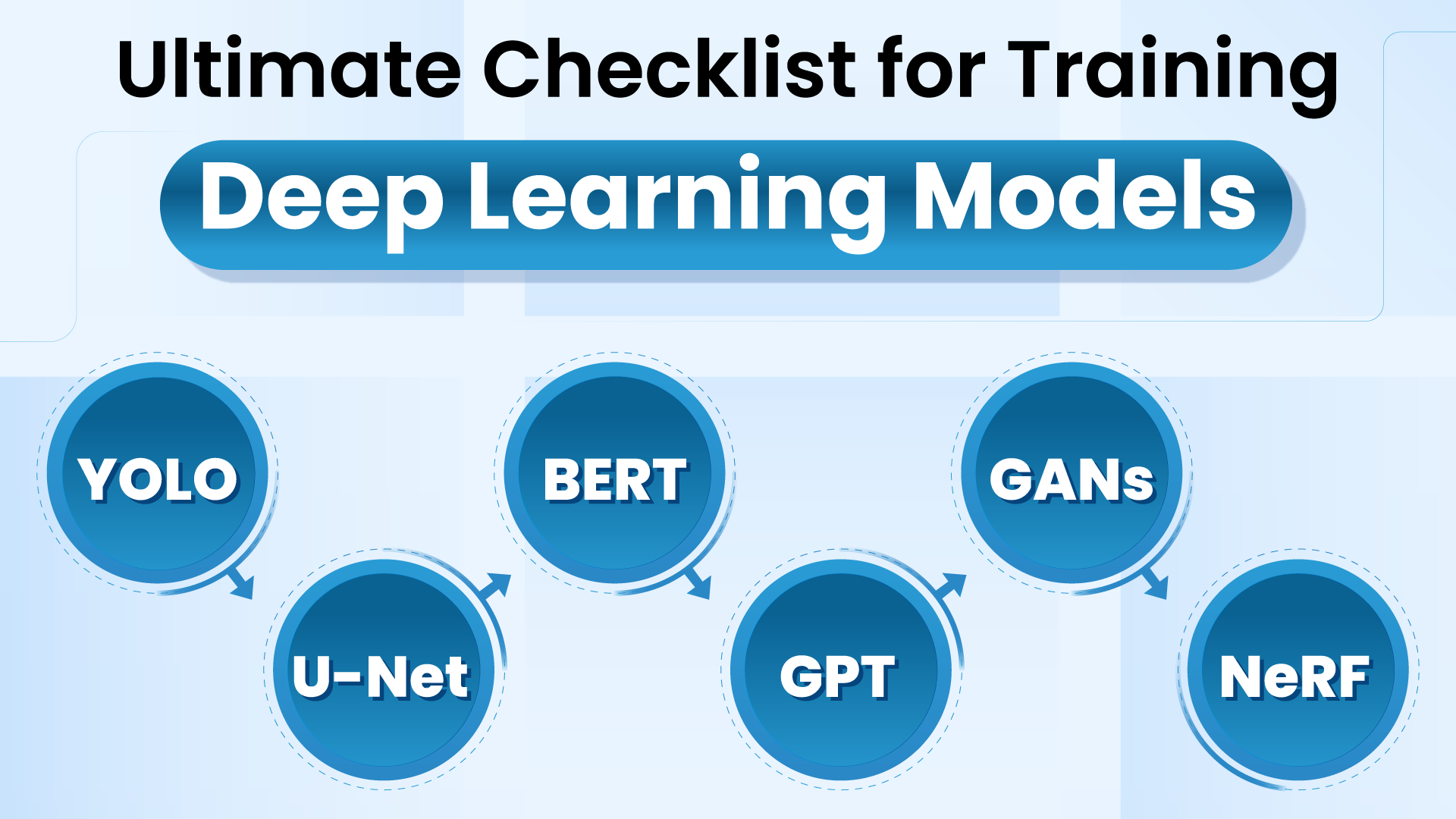

Overview of Artificial Neural Networks (ANNs): The Foundation of Modern AI

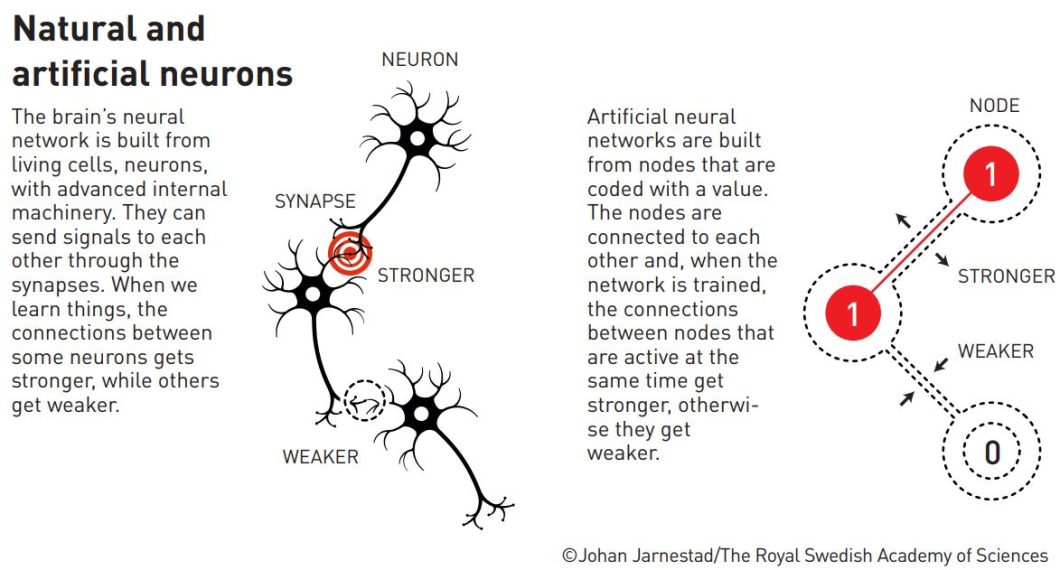

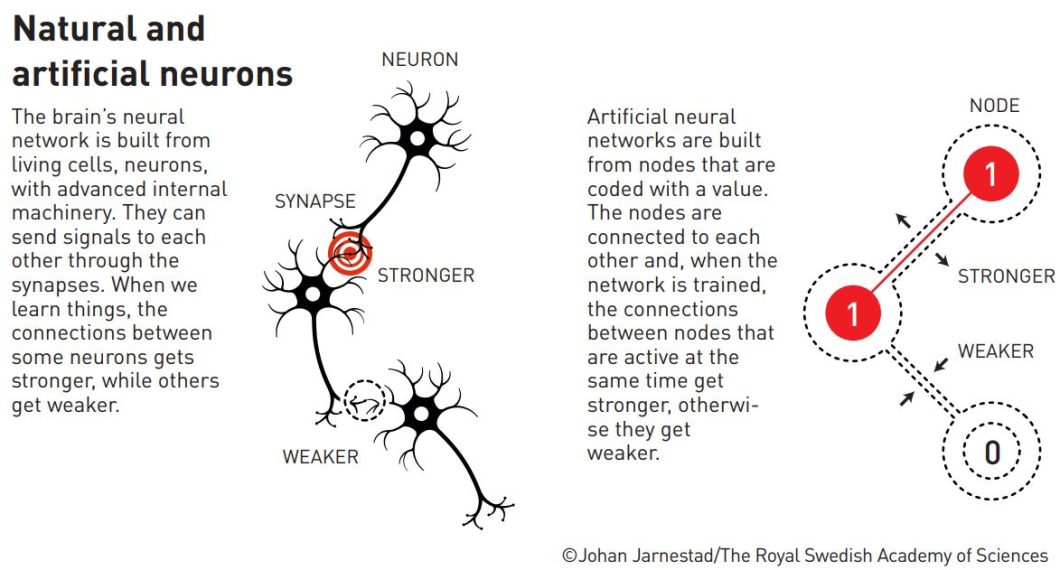

John Hopfield and Geoffrey Hinton made foundational discoveries and inventions that enabled machine learning with artificial neural networks (ANNs), which are the building blocks of modern AI. Mathematics, computer science, biology, and physics form the roots of machine learning and neural networks. For example, ANNs are inspired by the biological neurons in the brain. Essentially, ANNs are large collections of “neurons”, or nodes, connected by “synapses”, or weighted couplings. Researchers train them to perform certain tasks rather than asking them to execute a predetermined set of instructions. This picture is also similar to spin models in statistical physics, which are used in theories of magnetism and alloys.
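To make the “neurons connected by weighted couplings” picture concrete, here is a minimal Python sketch of one artificial neuron and a layer of them. The sigmoid activation and the example numbers are illustrative assumptions, not part of any specific historical model: each neuron multiplies its inputs by connection weights, adds a bias, and squashes the result into the range 0 to 1.

```python
import math

def neuron(inputs, weights, bias):
    """One artificial neuron: weighted sum of inputs plus a bias, passed through a sigmoid."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1.0 / (1.0 + math.exp(-z))

def layer(inputs, weight_rows, biases):
    """A layer is many neurons reading the same inputs, each with its own weights and bias."""
    return [neuron(inputs, row, b) for row, b in zip(weight_rows, biases)]

# Illustrative (made-up) values: two inputs feeding a layer of three neurons.
outputs = layer([1.0, 0.5], [[0.2, -0.4], [0.7, 0.1], [-0.3, 0.9]], [0.0, -0.1, 0.05])
print(outputs)
```

Training, in this picture, means adjusting the `weights` and `bias` values from data instead of writing explicit instructions.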

Research on neural networks and machine learning dates back to the early days of computing. ANNs are made of nodes, layers, connections, and weights: each layer contains many nodes, nodes are linked by connections, and every connection has a weight. Data goes in, and the connection weights change according to mathematical models. In the ANN field, researchers explored two architectures for systems of interconnected nodes:

- Recurrent Neural Networks (RNNs)

- Feedforward neural networks

RNNs are a type of neural network that takes in sequential data, such as a time series, to make sequential predictions, and they are known for their “memory”. RNNs are useful for a wide range of tasks such as weather prediction, stock price prediction, and, nowadays, deep learning tasks like language translation, natural language processing (NLP), sentiment analysis, and image captioning. Feedforward neural networks, on the other hand, are more traditional one-way networks in which data flows in a single direction (forward), unlike RNNs, which have loops. Now that we understand ANNs, let’s dive into John Hopfield’s and Geoffrey Hinton’s research individually.
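The difference in data flow can be sketched in a few lines of Python. This is a simplified illustration with made-up weights and a tanh activation (both assumptions): the feedforward function sees only the current input, while the recurrent one carries a hidden state `h` from step to step through a loop, which is the “memory” described above.

```python
import math

def feedforward(x, w_in):
    """Output depends only on the current input x; data flows one way."""
    return [math.tanh(sum(w * xi for w, xi in zip(row, x))) for row in w_in]

def recurrent(sequence, w_in, w_rec):
    """Process a sequence step by step, feeding the previous hidden state back in."""
    h = [0.0] * len(w_rec)  # initial hidden state: nothing remembered yet
    outputs = []
    for x in sequence:
        # The right-hand side is evaluated with the previous h before h is reassigned.
        h = [
            math.tanh(
                sum(w * xi for w, xi in zip(w_in[k], x))     # contribution of the current input
                + sum(u * hj for u, hj in zip(w_rec[k], h))  # contribution of the previous state
            )
            for k in range(len(h))
        ]
        outputs.append(h)
    return outputs

# The same input at two consecutive steps: the feedforward output never changes,
# but the recurrent output does, because h remembers the first step.
seq = [[1.0], [1.0]]
print(feedforward(seq[1], [[0.5]]))
print(recurrent(seq, [[0.5]], [[0.8]]))
```

Setting the recurrent weights `w_rec` to zero removes the loop, and the recurrent network then behaves exactly like the feedforward one at every step.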

Hopfield’s Contribution: Recurrent Networks and Associative Memory

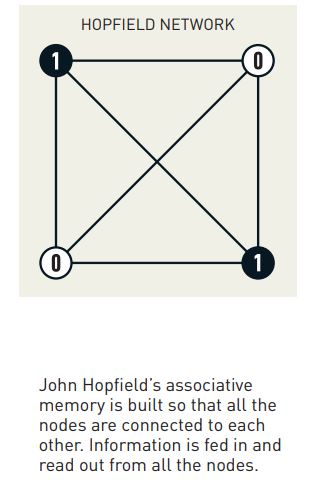

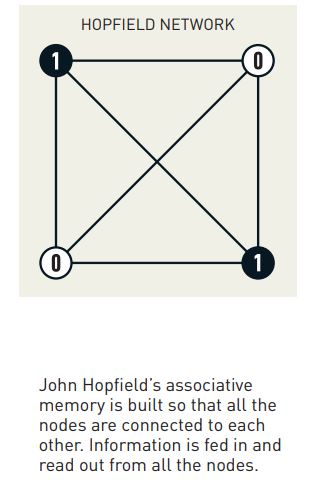

John J. Hopfield, a physicist working in biological physics, published a dynamical model in 1982 for an associative memory based on a simple recurrent neural network. This simple memory-based RNN structure was new, and it was influenced by his background in physics, such as domains in magnetic systems and vortices in fluid flow. Recurrent networks with loops allow information to persist and influence future computations, much like a chain of whispers in which each person’s whisper affects the next.

Hopfield’s most important contribution was the development of the Hopfield network model, so let’s look at that next.

The Hopfield Network Model

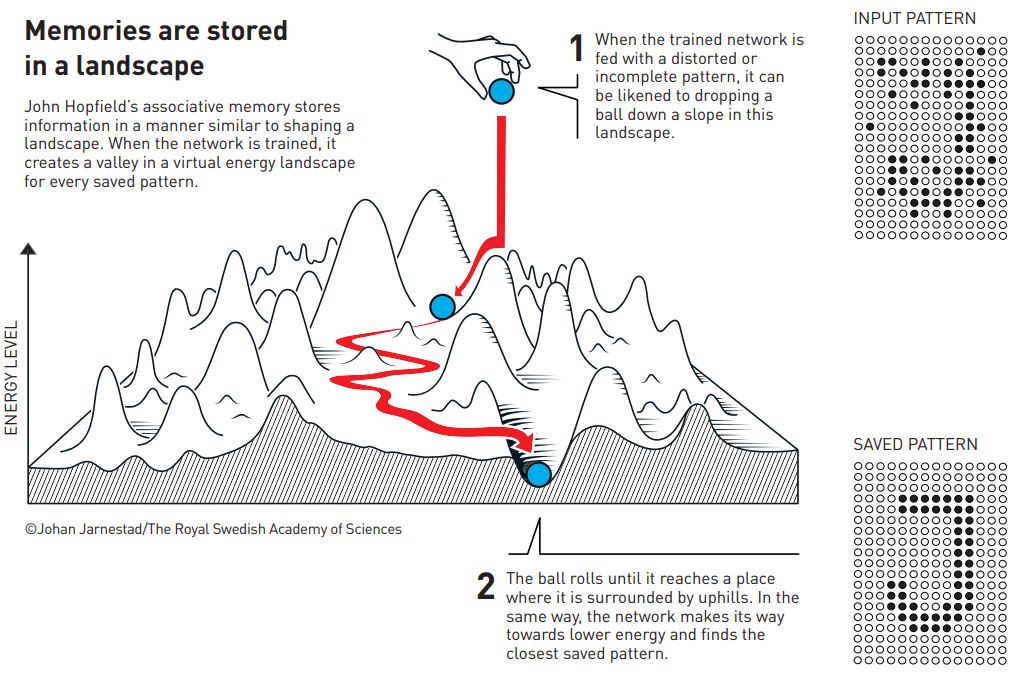

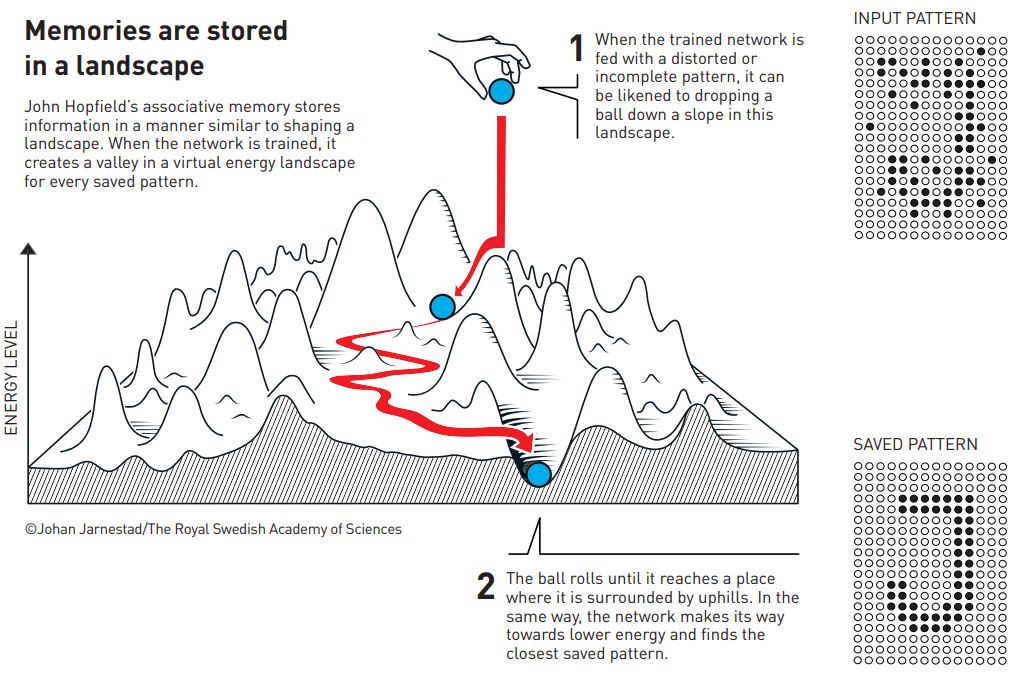

Hopfield’s network model is an associative memory based on a simple recurrent neural network. As we have discussed, an RNN consists of connected nodes, but the model Hopfield developed had a distinctive feature called an “energy function”, whose low points encode the network’s memories. Imagine this energy function as a landscape with hills and valleys. The network’s state is like a ball rolling on this landscape, and it naturally settles in the lowest points, the valleys, which represent stable states. These stable states are the memories stored in the network.
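In the common textbook formulation (assumed here: each neuron takes the value +1 or −1, connections are symmetric with no self-connections, and threshold terms are omitted for brevity), the energy landscape and the update rule can be written as follows. Each neuron computes its total input $h_i$ and switches to the sign of that input; the last line shows why every such flip can only lower the energy, so the “ball” keeps rolling downhill until it reaches a valley.

$$
E(\mathbf{s}) = -\frac{1}{2}\sum_{i}\sum_{j \neq i} w_{ij}\, s_i s_j,
\qquad s_i \in \{-1, +1\},\quad w_{ij} = w_{ji},\quad w_{ii} = 0
$$

$$
h_i = \sum_{j \neq i} w_{ij}\, s_j,
\qquad
s_i \leftarrow \begin{cases} +1 & \text{if } h_i \ge 0 \\ -1 & \text{otherwise} \end{cases}
$$

$$
\Delta E = -\left(s_i^{\text{new}} - s_i^{\text{old}}\right) h_i = -2\,\lvert h_i \rvert \le 0
\quad \text{whenever neuron } i \text{ flips}
$$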

The term “associative memory” means that this network can link a pattern to the right stable state, even when the pattern is distorted. It’s like recognizing a song from just a few notes. Even if you give the network a partial or noisy input, it can still retrieve the complete memory, like filling in the missing pieces of a puzzle. This ability to recall complete patterns from incomplete information makes the Hopfield network a significant contribution to the field of machine learning.
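A minimal Python sketch of this recall process follows. It assumes the common ±1 formulation with Hebbian storage (each weight proportional to the sum over stored patterns of $p_i p_j$) and asynchronous updates, one neuron at a time; the two 8-neuron patterns are made-up examples chosen to be orthogonal so that both can be stored cleanly. Flipping one neuron of a stored pattern and running `recall` brings the network back to the original memory.

```python
def store(patterns):
    """Hebbian rule: strengthen connections between neurons that are active together."""
    n = len(patterns[0])
    w = [[0.0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:  # no self-connections
                    w[i][j] += p[i] * p[j] / n
    return w

def energy(state, w):
    """Hopfield energy E(s) = -1/2 * sum_ij w_ij s_i s_j."""
    n = len(state)
    return -0.5 * sum(w[i][j] * state[i] * state[j] for i in range(n) for j in range(n))

def recall(w, state, max_sweeps=10):
    """Update neurons one at a time until none changes, i.e. a valley is reached."""
    s = list(state)
    n = len(s)
    for _ in range(max_sweeps):
        changed = False
        for i in range(n):
            h = sum(w[i][j] * s[j] for j in range(n))  # total input to neuron i
            new = 1 if h >= 0 else -1
            if new != s[i]:
                s[i], changed = new, True
        if not changed:
            break
    return s

a = [1, 1, 1, 1, -1, -1, -1, -1]
b = [1, -1, 1, -1, 1, -1, 1, -1]
w = store([a, b])

noisy = [-a[0]] + a[1:]  # corrupt the first neuron of pattern a
restored = recall(w, noisy)
print(restored == a)  # True: the full memory is recovered from the noisy cue
```

Image reconstruction works the same way, with each pixel acting as one neuron; the number of patterns a network of this kind can store reliably is limited relative to its size.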

Applications of Hopfield Networks

The Hopfield network has influenced research across the computer science field to this day. Researchers found applications in various areas, notably pattern recognition and optimization problems. Hopfield networks can recognize images even when they are distorted or incomplete. They are also useful for search problems where you need to find the best solution among many possibilities, such as finding the shortest route. The Hopfield network has been used to find approximate solutions to classic computer science problems like the traveling salesman problem, and its associative memory has been used for tasks like image reconstruction.

Hopfield’s work laid the foundation for further developments in neural networks, especially in deep learning. His research inspired many others to explore the potential of neural networks, including Geoffrey Hinton, who took these ideas to new heights with his work on deep learning and generative AI. Next, let’s dive into Hinton’s research and see why he is called the godfather of AI.

Hinton’s Contribution: Deep Learning and Generative AI

Geoffrey Hinton is a pioneer in AI whose research led to the current advances in artificial neural networks. His research changed our perspective on how machines can learn and paved the way for modern AI applications that are transforming industries. Hinton explored the potential of several types of artificial neural networks and made significant contributions to various architectures and training methods, which we will discuss in this section.

Hinton’s Work on Various ANN Architectures

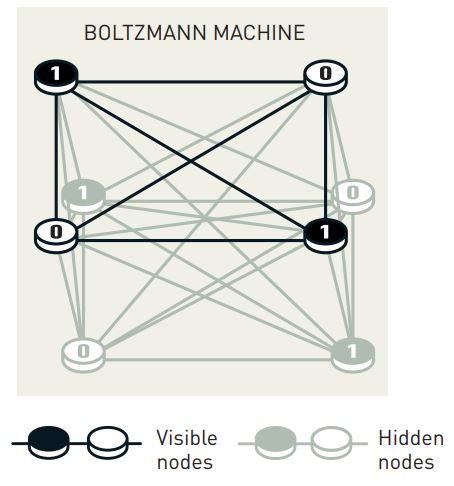

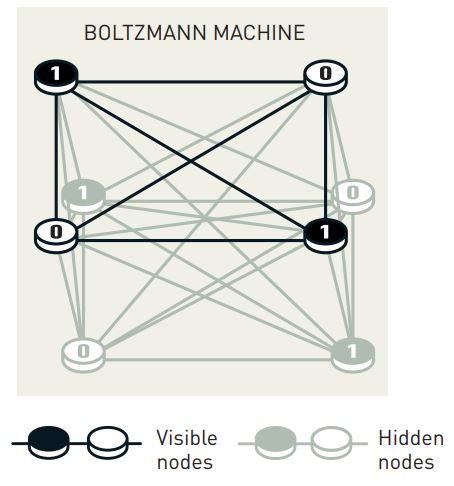

In 1983–1985, Geoffrey Hinton, together with Terrence Sejnowski and other coworkers, developed an extension of Hopfield’s model called the Boltzmann machine. It is a stochastic recurrent neural network, but unlike the Hopfield model, the Boltzmann machine is a generative model. The Boltzmann machine is one of the earliest approaches to deep learning. It is a type of ANN that uses a stochastic (random) approach to learn the underlying structure of data. Its nodes act like switches and come in two kinds: visible nodes, which represent the input data, and hidden nodes, which capture internal representations. Imagine it as a network of connected switches, each randomly flipping between “on” and “off” states.
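The “randomly flipping switches” can be sketched in Python. This is a toy illustration under stated assumptions: units take the values 0 and 1, weights are symmetric, and the two-unit network with hand-picked weights and biases is hypothetical. Each unit turns on with a probability given by a logistic function of its energy gap divided by a temperature T. Repeatedly resampling the units (Gibbs sampling) visits each joint state with a frequency that approaches the Boltzmann distribution $p(\mathbf{s}) \propto e^{-E(\mathbf{s})/T}$, which gives the machine its name.

```python
import itertools
import math
import random

def energy(state, weights, biases):
    """E(s) = -sum_{i<j} w_ij s_i s_j - sum_i b_i s_i, with every s_i in {0, 1}."""
    n = len(state)
    e = -sum(biases[i] * state[i] for i in range(n))
    for i in range(n):
        for j in range(i + 1, n):
            e -= weights[i][j] * state[i] * state[j]
    return e

def prob_on(i, state, weights, biases, temperature=1.0):
    """Probability that switch i is 'on', given the current state of all other switches."""
    gap = biases[i] + sum(weights[i][j] * state[j] for j in range(len(state)) if j != i)
    return 1.0 / (1.0 + math.exp(-gap / temperature))

def gibbs_sample(weights, biases, sweeps, temperature=1.0, seed=0):
    """Resample each unit in turn and record how often each joint state is visited."""
    rng = random.Random(seed)
    state = [rng.randint(0, 1) for _ in biases]
    counts = {}
    for _ in range(sweeps):
        for i in range(len(state)):
            state[i] = 1 if rng.random() < prob_on(i, state, weights, biases, temperature) else 0
        key = tuple(state)
        counts[key] = counts.get(key, 0) + 1
    return {s: c / sweeps for s, c in counts.items()}

def boltzmann_distribution(weights, biases, temperature=1.0):
    """Exact p(s) = exp(-E(s)/T) / Z by enumerating every state (feasible only for tiny nets)."""
    states = list(itertools.product([0, 1], repeat=len(biases)))
    unnorm = [math.exp(-energy(s, weights, biases) / temperature) for s in states]
    z = sum(unnorm)
    return {s: u / z for s, u in zip(states, unnorm)}

# Hypothetical two-unit network: a positive coupling makes the units prefer being on together.
weights = [[0.0, 1.5], [1.5, 0.0]]
biases = [-1.0, 0.5]
print(gibbs_sample(weights, biases, sweeps=20000))
print(boltzmann_distribution(weights, biases))
```

The sampled frequencies should land close to the exact probabilities, with the lowest-energy state (both units on) visited most often.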

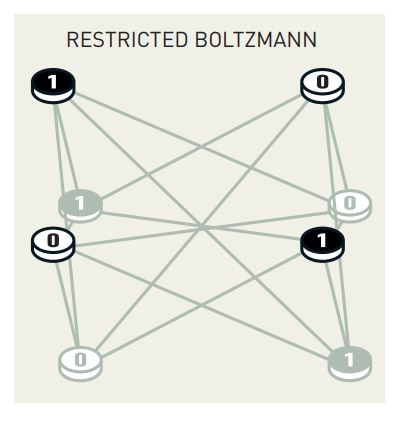

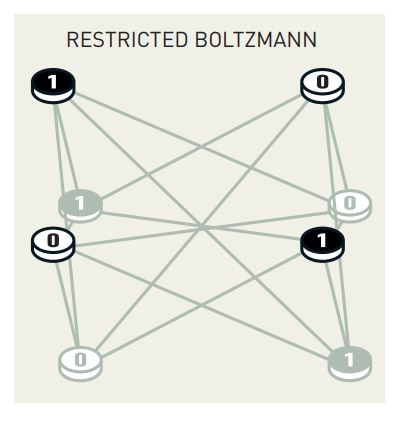

The Boltzmann machine still shares the core idea of the Hopfield model: it aims to find states of minimum energy, which correspond to the best representation of the input data. This architecture, unique at the time, allowed it to learn internal representations and even generate new samples resembling the data it had learned from. However, training full Boltzmann machines can be computationally expensive. So Hinton and his colleagues turned to a simplified version called the restricted Boltzmann machine (RBM). The RBM is a slimmed-down version with fewer weights, which makes it easier to train while remaining a versatile tool.
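Written out in the standard textbook formulation (the symbols are our notation, not the article’s: binary visible units $v_i$, hidden units $h_j$, visible-hidden weights $W$, symmetric within-layer weights $L$ and $J$, and biases $a$ and $b$), a general Boltzmann machine with hidden units has three kinds of couplings. The restricted version simply drops the two within-layer kinds, so each hidden unit depends only on the visible layer and vice versa, which is what makes the RBM much cheaper to work with.

$$
E_{\text{BM}}(\mathbf{v}, \mathbf{h}) = -\sum_{i,j} v_i W_{ij} h_j
\;-\; \frac{1}{2}\sum_{i \neq i'} v_i L_{ii'} v_{i'}
\;-\; \frac{1}{2}\sum_{j \neq j'} h_j J_{jj'} h_{j'}
\;-\; \sum_i a_i v_i \;-\; \sum_j b_j h_j
$$

$$
E_{\text{RBM}}(\mathbf{v}, \mathbf{h}) = -\sum_{i,j} v_i W_{ij} h_j \;-\; \sum_i a_i v_i \;-\; \sum_j b_j h_j,
\qquad
P(\mathbf{v}, \mathbf{h}) = \frac{e^{-E_{\text{RBM}}(\mathbf{v}, \mathbf{h})}}{Z}
$$

$$
P(h_j = 1 \mid \mathbf{v}) = \sigma\Big(b_j + \sum_i v_i W_{ij}\Big),
\qquad
P(v_i = 1 \mid \mathbf{h}) = \sigma\Big(a_i + \sum_j W_{ij} h_j\Big),
\qquad
\sigma(x) = \frac{1}{1 + e^{-x}}
$$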

In a restricted Boltzmann machine, there are no connections between nodes in the same layer. This restriction proved particularly powerful when Hinton later showed how to stack RBMs to build deep, multi-layered networks capable of learning complex patterns. Researchers frequently use the machines in a chain, one after the other: after the first restricted Boltzmann machine is trained, the activities of its hidden nodes are used as training data for the next machine, and so on.
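This layer-by-layer recipe can be sketched in plain Python. It is a simplified illustration under several assumptions, not Hinton’s original code: binary units; training with one step of contrastive divergence (CD-1), the approximate learning rule Hinton introduced for RBMs; hidden-unit probabilities (rather than sampled on/off states) passed up as the next layer’s training data; and hypothetical hyperparameters (learning rate, epochs, layer sizes, toy data).

```python
import math
import random

def sigmoid(x):
    """Logistic function, written to avoid overflow for large negative inputs."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)

class RBM:
    """Minimal binary restricted Boltzmann machine trained with CD-1."""

    def __init__(self, n_visible, n_hidden, seed=0):
        self.rng = random.Random(seed)
        self.W = [[self.rng.gauss(0.0, 0.01) for _ in range(n_hidden)] for _ in range(n_visible)]
        self.a = [0.0] * n_visible  # visible biases
        self.b = [0.0] * n_hidden   # hidden biases

    def hidden_probs(self, v):
        # No hidden-hidden connections, so each hidden unit depends only on v.
        return [sigmoid(self.b[j] + sum(v[i] * self.W[i][j] for i in range(len(v))))
                for j in range(len(self.b))]

    def visible_probs(self, h):
        # No visible-visible connections, so each visible unit depends only on h.
        return [sigmoid(self.a[i] + sum(self.W[i][j] * h[j] for j in range(len(h))))
                for i in range(len(self.a))]

    def sample(self, probs):
        return [1 if self.rng.random() < p else 0 for p in probs]

    def cd1_update(self, v0, lr):
        ph0 = self.hidden_probs(v0)                   # hidden probabilities driven by the data
        v1 = self.sample(self.visible_probs(self.sample(ph0)))  # one-step reconstruction
        ph1 = self.hidden_probs(v1)                   # hidden probabilities driven by the reconstruction
        for i in range(len(self.a)):
            for j in range(len(self.b)):
                self.W[i][j] += lr * (v0[i] * ph0[j] - v1[i] * ph1[j])
            self.a[i] += lr * (v0[i] - v1[i])
        for j in range(len(self.b)):
            self.b[j] += lr * (ph0[j] - ph1[j])

    def train(self, data, epochs=1000, lr=0.1):
        for _ in range(epochs):
            for v in data:
                self.cd1_update(v, lr)
        return self

    def reconstruction_error(self, data):
        """Mean squared difference between each input and its deterministic reconstruction."""
        total = 0.0
        for v in data:
            v_rec = self.visible_probs(self.hidden_probs(v))
            total += sum((x - y) ** 2 for x, y in zip(v, v_rec)) / len(v)
        return total / len(data)

def train_stack(data, layer_sizes, epochs=1000, lr=0.1):
    """Greedy layer-wise training: each RBM learns from the hidden activities of the one below."""
    rbms, layer_input, n_in = [], data, len(data[0])
    for k, n_hidden in enumerate(layer_sizes):
        rbm = RBM(n_in, n_hidden, seed=k).train(layer_input, epochs, lr)
        rbms.append(rbm)
        layer_input = [rbm.hidden_probs(v) for v in layer_input]  # features for the next layer
        n_in = n_hidden
    return rbms, layer_input

# Hypothetical toy data: three 6-pixel binary patterns.
data = [[1, 1, 0, 0, 0, 0], [0, 0, 1, 1, 0, 0], [0, 0, 0, 0, 1, 1]]
untrained = RBM(6, 3, seed=0)
trained = RBM(6, 3, seed=0).train(data)
print(untrained.reconstruction_error(data), trained.reconstruction_error(data))
rbms, top_features = train_stack(data, layer_sizes=[3, 2])
print(top_features)  # a 2-number code for each of the three patterns
```

On this toy data, the trained RBM’s reconstruction error should fall well below that of the untrained one; in a real deep belief network, the stacked layers would then be fine-tuned as a whole.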

Backpropagation: Training AI Effectively

In 1986, David Rumelhart, Hinton, and Ronald Williams demonstrated a key advance: how architectures with one or more hidden layers could be trained for classification using the backpropagation algorithm. This algorithm works like a feedback mechanism for neural networks. Its objective is to minimize the mean squared deviation between the network’s output and the training data by gradient descent.
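For a network with one hidden layer, the objective and update can be written as follows. The notation is an assumption for illustration: activation function $f$ (for example, the sigmoid), weighted inputs $z$, hidden activities $h_j$, outputs $y_k$, targets $t_k$, learning rate $\eta$, and the squared error summed over the outputs for one training example, which differs from the mean only by a constant factor. The error signals $\delta$ are computed at the output first and then passed backward through the weights, which gives the algorithm its name.

$$
E = \frac{1}{2}\sum_k \left(y_k - t_k\right)^2,
\qquad
w \leftarrow w - \eta\, \frac{\partial E}{\partial w}
$$

$$
\delta_k = \left(y_k - t_k\right) f'(z_k),
\qquad
\delta_j = f'(z_j) \sum_k w_{kj}\, \delta_k,
\qquad
\frac{\partial E}{\partial w_{kj}} = \delta_k\, h_j,
\qquad
\frac{\partial E}{\partial w_{ji}} = \delta_j\, x_i
$$

For the sigmoid, $f'(z) = f(z)\,\big(1 - f(z)\big)$, so each derivative can be computed from activities the forward pass already produced.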

In simple terms, backpropagation lets the network learn from its mistakes: the error at the output is passed backward, layer by layer, to work out how much each connection weight contributed to it, and each weight is then adjusted to reduce that error, which improves the network’s performance over time. Hinton’s work on backpropagation remains central to the efficient training of deep neural networks to this day.
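The same idea fits in a short, dependency-free Python sketch: a hypothetical network with two inputs, two sigmoid hidden units, and one sigmoid output. The backward pass computes an error signal at the output, sends it back through the output weights to the hidden layer, and turns both into weight gradients; one gradient-descent step then nudges every parameter against its gradient. The weights, learning rate, and training example are made up for illustration.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def forward(x, W1, b1, W2, b2):
    """Compute hidden activities h and outputs y for input x."""
    h = [sigmoid(sum(w * xi for w, xi in zip(row, x)) + b) for row, b in zip(W1, b1)]
    y = [sigmoid(sum(w * hj for w, hj in zip(row, h)) + b) for row, b in zip(W2, b2)]
    return h, y

def loss(x, t, params):
    """Squared error 1/2 * sum_k (y_k - t_k)^2 for one training example."""
    _, y = forward(x, *params)
    return 0.5 * sum((yk - tk) ** 2 for yk, tk in zip(y, t))

def backprop(x, t, W1, b1, W2, b2):
    """Return gradients of the loss with respect to (W1, b1, W2, b2)."""
    h, y = forward(x, W1, b1, W2, b2)
    # Output error signal; for the sigmoid, f'(z) = y * (1 - y).
    d_out = [(yk - tk) * yk * (1 - yk) for yk, tk in zip(y, t)]
    # Hidden error signal: output errors passed backward through W2.
    d_hid = [hj * (1 - hj) * sum(W2[k][j] * d_out[k] for k in range(len(d_out)))
             for j, hj in enumerate(h)]
    gW2 = [[dk * hj for hj in h] for dk in d_out]
    gW1 = [[dj * xi for xi in x] for dj in d_hid]
    return gW1, d_hid, gW2, d_out  # bias gradients equal the error signals

def sgd_step(params, grads, lr):
    """Gradient descent: move every parameter a small step against its gradient."""
    def step_matrix(M, G):
        return [[m - lr * g for m, g in zip(mr, gr)] for mr, gr in zip(M, G)]

    def step_vector(v, g):
        return [vi - lr * gi for vi, gi in zip(v, g)]

    W1, b1, W2, b2 = params
    gW1, gb1, gW2, gb2 = grads
    return step_matrix(W1, gW1), step_vector(b1, gb1), step_matrix(W2, gW2), step_vector(b2, gb2)

params = ([[0.1, -0.2], [0.4, 0.3]], [0.0, 0.1], [[0.5, -0.6]], [0.05])
x, t = [1.0, 0.5], [1.0]
before = loss(x, t, params)
params = sgd_step(params, backprop(x, t, *params), lr=0.1)
after = loss(x, t, params)
print(before, after)  # the error shrinks after one step
```

A standard way to check such code is to compare each backpropagated gradient with a finite-difference estimate of the same derivative; repeating the step over many examples is what “training” means in practice.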

Towards Deep Learning and Generative AI

The breakthroughs Hinton made with his team were soon followed by successful applications in AI, including pattern recognition in images, languages, and scientific data. One of those developments was convolutional neural networks (CNNs), which were trained by backpropagation. Another successful example from that time was the long short-term memory (LSTM) method created by Sepp Hochreiter and Jürgen Schmidhuber. LSTM is a recurrent network for processing sequential data, as in speech and language, and it can be mapped to a multilayered network by unfolding it in time. However, it remained a challenge to train deep multilayered networks with many connections between consecutive layers.

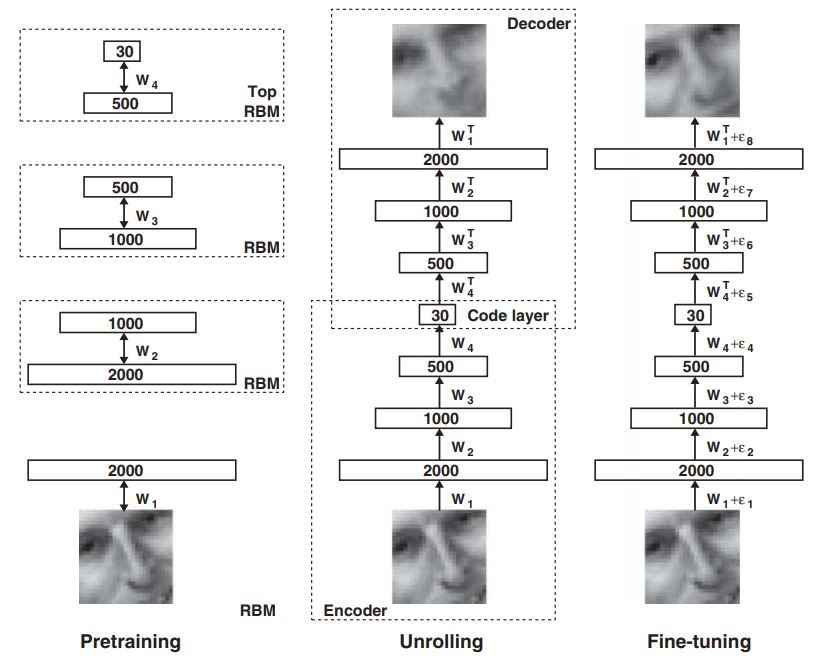

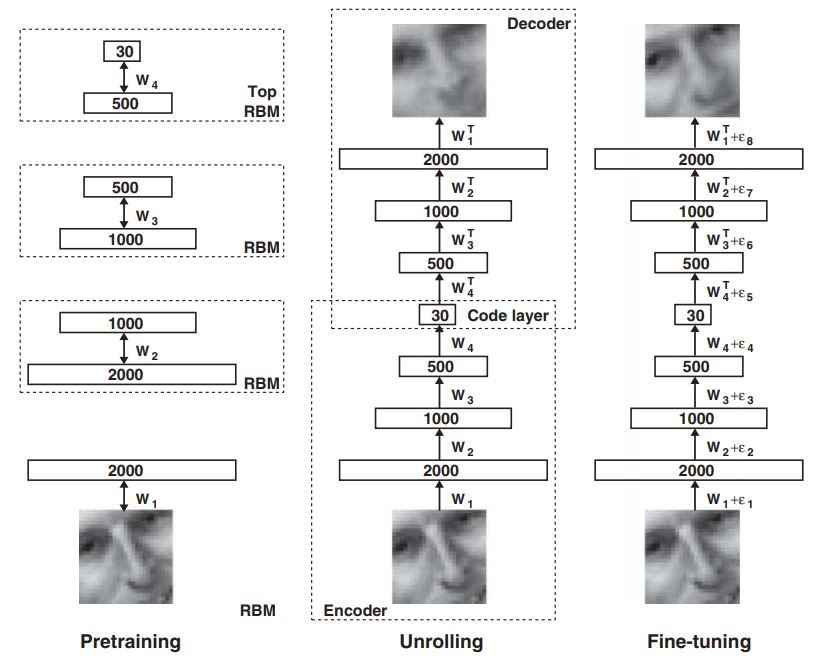

Hinton was the leading figure in developing the solution, and an important tool was the restricted Boltzmann machine (RBM). For RBMs, Hinton created an efficient approximate learning algorithm, called contrastive divergence, which was much faster than the learning algorithm for the full Boltzmann machine. Hinton and his collaborators then developed a pre-training procedure for multilayer networks, in which the layers are trained one at a time using an RBM. An early application of this approach was an autoencoder network for dimensionality reduction.
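In the usual statement of the rule (notation assumed: learning rate $\epsilon$, angle brackets for averages, $\mathbf{v}'$ a one-step reconstruction of the data vector $\mathbf{v}$), the exact likelihood gradient for an RBM weight compares visible-hidden correlations under the data with those under the model’s own equilibrium distribution, which requires long sampling runs. One-step contrastive divergence (CD-1) replaces the model average with statistics from a single reconstruction: compute the hidden units from $\mathbf{v}$, reconstruct $\mathbf{v}'$ from them, and recompute the hidden probabilities.

$$
\frac{\partial \log P(\mathbf{v})}{\partial W_{ij}}
= \langle v_i h_j \rangle_{\text{data}} - \langle v_i h_j \rangle_{\text{model}}
$$

$$
\Delta W_{ij} = \epsilon \left( \langle v_i h_j \rangle_{\text{data}} - \langle v_i h_j \rangle_{\text{recon}} \right),
\qquad
\Delta a_i = \epsilon \left( v_i - v_i' \right),
\qquad
\Delta b_j = \epsilon \left( P(h_j = 1 \mid \mathbf{v}) - P(h_j = 1 \mid \mathbf{v}') \right)
$$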

After pre-training, it became possible to perform a global fine-tuning of the parameters using the backpropagation algorithm. Pre-training with RBMs identified structures in the data, like corners in images, without using labeled training data. Once these structures had been found, labeling them by backpropagation turned out to be a relatively simple task. By linking layers pre-trained in this way, Hinton was able to successfully implement examples of deep and dense networks, a major achievement for deep learning. Now, let’s move on to explore the impact of Hopfield’s and Hinton’s research and the future implications of their work.

The Impact of Hopfield and Hinton’s Research

The groundbreaking research of Hopfield and Hinton has had a profound impact on the field of AI: their work advanced the theoretical foundations of neural networks and led to the capabilities that AI has today. Image recognition, for example, has been greatly enhanced by their work, enabling tasks like recognizing objects, faces, and even emotions. Natural language processing (NLP) is another area: thanks to their contributions, we now have models that can understand and generate human-like text, enabling popular applications like the GPT models.

The list of everyday applications based on ANNs is long, and these networks are behind much of what we do with computers. Their research has also had a broader impact on scientific discovery. In fields like physics, chemistry, and biology, researchers use AI to simulate experiments and design new drugs and materials. In astrophysics and astronomy, ANNs have also become a standard data analysis tool; for example, they were recently used to produce a neutrino image of the Milky Way.

Decision support in health care is also a well-established application of ANNs. A recent prospective randomized study of mammographic screening images showed a clear benefit of using machine learning to improve the detection of breast cancer. Other medical uses include motion correction for magnetic resonance imaging (MRI) scans.

The Future Implications of the Nobel Prize in Physics 2024

The future implications of John J. Hopfield’s and Geoffrey E. Hinton’s research are vast. Hopfield’s research on recurrent networks and associative memory laid the foundations, and Hinton’s further exploration of deep learning and generative AI has led to the development of powerful AI systems. As AI continues to evolve, we can expect even more groundbreaking research and transformative applications. Their work has laid the foundation for a future in which AI can help solve the world’s most pressing challenges. The 2024 Nobel Prize in Physics is a testament to their remarkable achievements and their lasting impact on AI. However, as we continue to develop and deploy AI systems, it is important that we use them ethically and responsibly to benefit people and the planet.

FAQs

Q1. What are artificial neural networks (ANNs)?

ANNs are inspired by the biological neural networks of the human brain. They consist of connected nodes organized in layers, with weighted connections between them. Learning occurs by adjusting these weights based on the network’s training data.

Q2. What is deep learning?

Deep learning is a subfield of machine learning that uses ANNs with multiple hidden layers to learn complex patterns and representations from input data.

Q3. What is generative AI?

Generative AI refers to AI systems that can generate new content. These systems learn the patterns and structures of their input data and then use this knowledge to create new, original content.

Q4. What is the significance of Hopfield and Hinton’s research?

Hopfield and Hinton’s research has been foundational to the development of modern AI. Their work led to the practical applications of AI we have today.